What is MPEG doing these days?

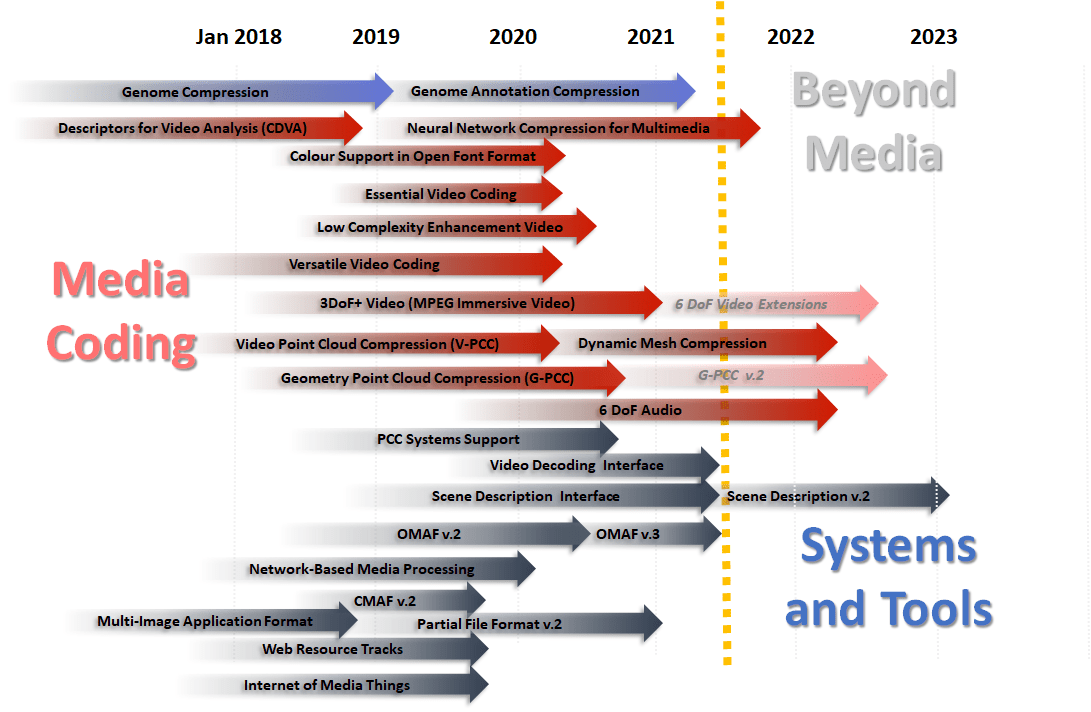

It is now a few months since I last talked about the standards being developed by MPEG. As the group moves fast, I think it is time for an update on the main areas of standardisation: Video, Audio, Point Clouds, Fonts, Neural Networks, Genomic data, Scene description, Transport, File Format and API. You will also find a few words on three explorations that MPEG is making: Video Coding for Machines, MPEG-21 contracts to smart contracts, and Machine tool data.…