On 19 July 2020 – two years ago – the wild idea of an organisation dedicated to the development of AI-based data coding standards was made public. What has happened in these two years?

- MPAI was established in September 2020.

- Four Calls for Technologies were published between December 2020 and February 2021.

- The corresponding four Technical Specifications were published in September, November and December 2021:

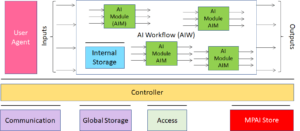

- AI Framework (MPAI-AIF, a standard environment to execute AI workflows composed of AI Modules).

- Compression and Understanding of Industrial Data (MPAI-CUI, standard AI-based financial data processing technologies and their application to Company Performance Prediction).

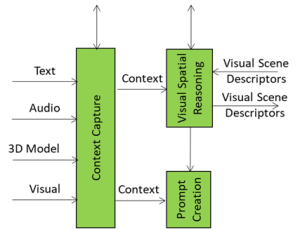

- Multimodal Conversation (MPAI-MMC, standard AI-based human-machine conversation technologies and their application to five use cases).

- Context-based Audio Enhancement (MPAI-CAE, standard AI-based audio experience-enhancement technologies and their application to four use cases).

- Completion of the set of specifications composing an MPAI standard: Reference Software, Conformance Testing and Performance Assessment, in addition to the Technical Specification. So far this has been partly achieved.

- IEEE adoption of the Technical Specifications without modification. The first MPAI Technical Specification converted to an IEEE standard is expected to be approved in the second half of September 2022.

- Publication of three Calls for Technologies and associated Functional and Commercial Requirements for data formats and technologies:

- The extended AI Framework standard (MPAI-AIF V2) will retain the functionalities specified by Version 1 and will enable the components of the Framework to access security functionalities. See the 1-minute video: YouTube https://bit.ly/3aWjgNt, non-YouTube https://bit.ly/3PlNYhL

- The extended Multimodal Conversation standard (MPAI-MMC V2) will enable a variety of new use cases, such as: separation and location of audio-visual objects in a scene (e.g., human beings, their voices and generic objects); the ability of a party in metaverse1 to import an environmental setting and a group of avatars from metaverse2; and representation and interpretation of the visual features of a human, either to extract information about their internal state (e.g., emotion) or to accurately reproduce the human as an avatar. See the 2-minute video: YouTube https://bit.ly/3PGRAL7, non-YouTube https://bit.ly/3PK3kwi

- The Neural Network Watermarking standard (MPAI-NNW) will provide the means to assess whether inserting a watermark degrades the performance of a neural network, how well a watermark detector can detect the presence of a watermark and a watermark decoder can retrieve the payload, and how to quantify the computational cost of injecting, detecting, and decoding a payload. See the 1-minute video: YouTube https://bit.ly/3PL28ZB, non-YouTube https://bit.ly/3Omxx3y

- Finally, MPAI has decided to establish the MPAI Store, where implementations of MPAI Technical Specifications will be submitted, validated, tested, and made available for download.

A short life with many results. Much more to accomplish.