Introduction

In Life inside MPEG I have described the breadth, complexity and interdependence of the work program managed by the MPEG ecosystem that has fed the digital media industry for the last 30 years. I did not mention, however, the actual amount of work developed by MPEG experts during an MPEG meeting. Indeed, at every meeting, the majority of work items listed in the post undergo a review prompted by member submissions. At the Macau meeting in October 2018, there were 1238 such documents covering the entire work program described in the post, and in fact more, because the post covers only a portion of what we are actually doing.

What I presume readers cannot imagine – and I do not claim that I do much more than they do – is the amount of work that individual companies perform to produce their submissions. Some documents may be the result of months of work often based on days/weeks/months of processing of audio, video, point clouds or other types of visual test sequences carried out by expensive high performance computers.

It is clear why this is done, at least in the majority of cases: the discovery of algorithms that enable better and/or new audio-visual user experiences may trigger the development and deployment of highly rewarding products, services and applications. By joining the MPEG process, such algorithms may become part of one or more standards, helping the industry develop top performance _interoperable_ products, services and applications.

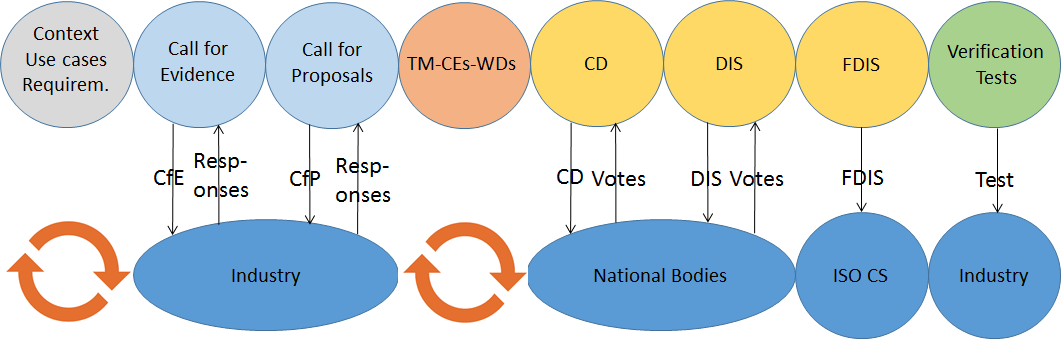

But what is the _MPEG process_? In this post I would like to answer this question. As I may not be able to describe everything, I will likely have to revisit this issue in the future. In any case the figure above should be used as a guide in the following.

How it all starts

The key players of the MPEG process are the MPEG members: currently ~1,500, of whom ~1/4 are from academia and ~3/4 from industry. Some ~500 of them attend the quarterly MPEG meetings.

Members bring ideas to MPEG for presentation and discussion at MPEG meetings. If the idea is found interesting and promising, an ad hoc group is typically established to explore the opportunity further until the next meeting. The proposer of the idea is typically appointed as chair of the ad hoc group. In this way MPEG offers the proposer the opportunity to become the entrepreneur who can convert an idea into a product, I mean, an MPEG standard.

To get to a standard it may take some time: the newer the idea, the more time it may take to make it actionable by the MPEG process. After the idea has been clarified, the first step is to understand: 1) the context for which the idea is relevant, 2) the “use cases” the idea offers advantages for and 3) the requirements that a solution should satisfy to support the use cases.

Even if idea, use context, use cases and requirements have been clarified, it does not mean that technologies necessarily exist out there that can be assembled to provide the needed solution. For this reason, MPEG typically produces and publishes a Call for Evidence (CfE) – sometimes more than one – with attached context, use cases and requirements. The CfE requests companies who think they have technologies satisfying the requirements to demonstrate what they can achieve, without requesting them to describe how the results have been achieved. In many cases respondents are requested to use specific test data to facilitate comparison. If MPEG does not have them, it will ask industry to provide them.

Testing technologies for the idea

If the result of the CfE is positive, MPEG will move to the next step and publish a Call for Proposals (CfP), with attached context, use cases, requirements, test data and evaluation method. The CfP requests companies who have technologies satisfying the requirements to submit responses to the CfP where they demonstrate the performance _and_ disclose the exact nature of the technologies that achieve the demonstrated results.
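The difference between the two calls can be summarised in a small data model. What follows is only a minimal Python sketch: the names `Call`, `Response` and `is_acceptable` are illustrative assumptions and do not correspond to any MPEG tool or official terminology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Call:
    """A call published by the group (hypothetical model)."""
    kind: str               # "CfE" or "CfP"
    attachments: frozenset  # documents attached to the call


# Attachments as listed in the text: the CfP adds test data and an evaluation method.
CFE = Call("CfE", frozenset({"context", "use cases", "requirements"}))
CFP = Call("CfP", CFE.attachments | {"test data", "evaluation method"})


@dataclass
class Response:
    """A company's answer to a call (hypothetical model)."""
    results: dict                     # what the technology achieves
    technology_description: str = ""  # how it is achieved; optional for a CfE


def is_acceptable(call: Call, response: Response) -> bool:
    """A CfE response only shows results; a CfP response must also disclose the technology."""
    if not response.results:
        return False
    if call.kind == "CfP":
        return bool(response.technology_description.strip())
    return True
```

With this model, a response carrying results but no description passes `is_acceptable(CFE, ...)` and fails `is_acceptable(CFP, ...)`, which is the distinction the two calls are meant to draw.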

Let’s see how this process has worked in the specific case of neural network compression. Note that the last row in italic refers to a future meeting. Links point to public documents on the MPEG web site.

| Mtg | YY | MM | Actions |
|---|---|---|---|
| 120 | 17 | Oct | |
| 121 | 18 | Jan | |
| 122 | 18 | Apr | |
| 123 | 18 | Jul | |
| 124 | 18 | Oct | |
| _126_ | _19_ | _Mar_ | |

We can see that Use Cases and Requirements are updated at each meeting and made public, Test data are requested from industry and the Evaluation Framework is developed well in advance of the CfP. In this particular case it will take 18 months just to move from the idea to CfP responses.

MPEG gets its hands on technology

A meeting where CfP submissions are due is typically a big event for the community involved. Knowledgeable people say that such a meeting is more intellectually rewarding than attending a conference. How could it be otherwise if participants not only can understand and discuss the technologies but also see and judge their actual performance? Everybody feels part of an engaging process of building a “new thing”.

If MPEG comes to the conclusion that the technologies submitted and retained are sufficient to start the development of a standard, the work is moved from the Requirements subgroup, which typically handles the process of moving from idea to proposal submission and assessment, to the appropriate technical group. If not, the idea of creating a standard is – maybe temporarily – dropped or further studies are carried out or a new CfP is issued calling for the missing technologies.

What happens next? The members involved in the discussions need to decide which technologies are useful to build the standard. Results are there but questions pop up from all sides. Those meetings are good examples of the “survival of the fittest” principle, applied to technologies as well as to people.

Eventually the group identifies useful technologies from the proposals and builds an initial Test Model (TM) of the solution. This is the starting point of a cyclic process where MPEG experts

- Identify critical points of the TM

- Define which experiments – called Core Experiments (CE) – should be carried out to improve TM performance

- Review members’ submissions

- Review CE technologies and adopt those bringing sufficient improvements.

At the right time (which may very well be the meeting where the proposals are reviewed or several meetings after), the group produces a Working Draft (WD). The WD is continually improved following the 4 steps above.
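The cycle above can be pictured as a loop in which proposals are measured against the current TM and adopted only if they help enough. The sketch below is a deliberately simplified illustration in Python: `Proposal`, `run_cycle`, the numeric `gain` and the single `threshold` are assumptions made for the example, and real CE decisions weigh many more factors (complexity, cross-checks, subjective quality) than one number.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    name: str
    gain: float  # measured improvement over the current TM, in arbitrary units


def run_cycle(tm_tools, tm_score, proposals, threshold):
    """One meeting cycle: adopt every CE proposal whose gain reaches the threshold.

    Simplification: gains are treated as additive, which real tools rarely are.
    """
    adopted = [p for p in proposals if p.gain >= threshold]
    new_tools = tm_tools + [p.name for p in adopted]
    new_score = tm_score + sum(p.gain for p in adopted)
    return new_tools, new_score


# Two hypothetical proposals reviewed at one meeting.
tools, score = run_cycle(
    ["base"], 10.0,
    [Proposal("tool_a", 0.5), Proposal("tool_b", 0.1)],
    threshold=0.25,
)
print(tools)  # tool_a is adopted, tool_b is not
```

Calling `run_cycle` again at the next meeting, with the updated tool list and score, mirrors the way the TM and later the WD are improved step by step.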

The birth of a “new baby” is typically not without impact on the whole MPEG ecosystem. Members may wake up to the need to support new requirements, or they may realise that specific applications require one or more “vehicles” to embed the technology in those applications, or they may come to the conclusion that the originally conceived solution needs to be split into more than one standard.

These and other events are handled by convening joint meetings between the group developing the technology and all technical “stakeholders” in other groups.

The approval process

Eventually MPEG is ready to “go public” with a document called Committee Draft (CD). However, this only means that the solution is submitted to the national standard organisations – National Bodies (NB) – for consideration. NB experts vote on the CD with comments. If a sufficient number of positive votes is received (this is what has happened for all MPEG CDs so far), MPEG assesses the comments received and decides on accepting or rejecting them one by one. The result is a new version of the specification – called Draft International Standard (DIS) – that is also sent to the NBs where it is assessed again by national experts, who vote and comment on it. MPEG reviews NB comments for the second time and produces the Final Draft International Standard (FDIS). This, after some internal processing by ISO, is eventually published as an International Standard.
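The sequence of approval stages can be sketched as a very small state machine. This is only an illustrative Python model: the `Stage` enum and the `advance` function are names chosen for the example, and the rule that an unapproved document stays where it is is an assumption, since the actual ISO procedures are richer than this.

```python
from enum import Enum


class Stage(Enum):
    CD = "Committee Draft"
    DIS = "Draft International Standard"
    FDIS = "Final Draft International Standard"
    IS = "International Standard"


# Stages in the order given in the text.
ORDER = [Stage.CD, Stage.DIS, Stage.FDIS, Stage.IS]


def advance(stage: Stage, approved: bool) -> Stage:
    """Return the next stage if the current step was approved, else stay put.

    Assumption: an unsuccessful step simply keeps the document at its
    current stage.
    """
    if stage is Stage.IS or not approved:
        return stage
    return ORDER[ORDER.index(stage) + 1]


stage = Stage.CD
for approved in (True, True, True):  # CD ballot, DIS ballot, ISO processing
    stage = advance(stage, approved)
print(stage.value)  # International Standard
```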

MPEG typically deals with complex technologies that companies consider “hot” because they are urgently needed for their products. Just as in companies the development of a product goes through different phases (alpha/beta releases etc., in addition to internal releases), achieving a stable specification requires many reviews. Because CD/DIS ballots may take time, experts may come to a meeting reporting bugs found or proposing improvements to the document under ballot. To take advantage of this additional information, which the group scrutinises on its merits, MPEG has introduced an unofficial “mezzanine” status called “Study on CD/DIS” where proposals for bug fixes and improvements are added to the document under ballot. These “Studies on CD/DIS” are communicated to the NBs to facilitate their votes on the official documents under ballot.

Let’s see in the table below how this part of the process has worked for the Point Cloud Compression (PCC) case. Only the most relevant documents have been retained. Rows in italic refer to future meetings.

| Mtg | YY | MM | Actions |
|---|---|---|---|
| 120 | 17 | Oct | 1. Approval of Report on PCC CfP responses 2. Approval of 7 CEs related documents |
| 121 | 18 | Jan | 1. Approval of 3 WDs 2. Approval of PCC requirements 3. Approval of 12 CEs related documents |
| 122 | 18 | Apr | 1. Approval of 2 WDs 2. Approval of 22 CEs related documents |
| 123 | 18 | Jul | 1. Approval of 2 WDs 2. Approval of 27 CEs related documents |
| 124 | 18 | Oct | 1. Approval of V-PCC CD 2. Approval of G-PCC WD 3. Approval of 19 CEs related documents 4. Approval of Storage of V-PCC in ISOBMFF files |
| _128_ | _19_ | _Oct_ | _1. Approval of V-PCC FDIS_ |
| _129_ | _20_ | _Jan_ | _1. Approval of G-PCC FDIS_ |

I would like to draw your attention to “Approval of 27 CEs related documents” in the July 2018 row. The definition of each of these CE documents requires lengthy discussions by involved experts because they describe the experiments that will be carried out by different parties at different locations and how the results will be compared for a decision. It should not be a surprise if some experts work from Thursday until Friday morning to get documents approved by the Friday plenary.

I would also like to draw your attention to “Approval of Storage of V-PCC in ISOBMFF files” in the October 2018 row. This is the start of a Systems standard that will enable the use of PCC in specific applications, as opposed to being just a data compression specification.

The PCC work item currently involves some 80 experts. If you consider that there were more than 500 experts at the October 2018 meeting, you should have a pretty good idea of the complexity of the MPEG machine and the amount of energy poured in by MPEG members.

The MPEG process – more than just a standard

In most cases MPEG performs “Verification Tests” on a standard produced to provide the industry with precise indications of the performance that can be expected from an MPEG compression standard. To do this the following is needed: specification of tests, collection of appropriate test material, execution of reference or proprietary software, execution of subjective tests, test analysis and reporting.

Very often, as a standard takes shape, new requirements for new functionalities are added. They become part of a standard either through the vehicle of “Amendments”, i.e. separate documents that specify how the new technologies can be added to the base standard, or “New Editions” where the technical content of the Amendment is directly integrated into the standard in a new document.

As a rule MPEG develops reference software for its standards. The reference software has the same normative value as the standard expressed in human language.

MPEG also develops conformance tests, supported by test suites, that enable a manufacturer to judge whether 1) its encoder implementation produces correct data, by feeding them into the reference decoder, or 2) its decoder implementation is capable of correctly decoding the test suites.
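The two conformance checks can be illustrated with a toy example. The sketch below uses a made-up run-length format as a stand-in for a real codec; `reference_decode`, `encoder_conforms`, `decoder_conforms` and `rle_encode` are hypothetical names for this illustration and are not part of any MPEG reference software. Because the toy format is lossless, the encoder check compares the decoded output with the source; for a lossy codec the check would rather be that the stream is decodable by the reference decoder.

```python
def reference_decode(stream):
    """Toy reference decoder: a stream is a list of (symbol, run_length) pairs."""
    out = []
    for symbol, run in stream:
        if not isinstance(run, int) or run < 1:
            raise ValueError("invalid run length")
        out.append(symbol * run)
    return "".join(out)


def encoder_conforms(encode, sources):
    """Check 1: feed the encoder's output into the reference decoder."""
    for src in sources:
        try:
            if reference_decode(encode(src)) != src:
                return False
        except ValueError:
            return False
    return True


def decoder_conforms(decode, test_suite):
    """Check 2: the decoder must reproduce the expected output of every test stream."""
    return all(decode(stream) == expected for stream, expected in test_suite)


def rle_encode(text):
    """A candidate encoder under test (hypothetical implementation)."""
    stream = []
    for ch in text:
        if stream and stream[-1][0] == ch:
            stream[-1] = (ch, stream[-1][1] + 1)
        else:
            stream.append((ch, 1))
    return stream


print(encoder_conforms(rle_encode, ["aaab", "xyz"]))  # True
```

The point of the design is that the reference decoder, not the manufacturer's own decoder, is the arbiter of what counts as correct data.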

Finally it may happen that bugs are discovered in a published standard. This is an event to be managed with great attention because industry may already have released some implementations.

Conclusions

MPEG is definitely a complex machine because it needs to assess if an idea is useful in the real world, understand if there are technologies that can be used to make a standard supporting that idea, get the technologies, integrate them and develop the standard. Often it also has to provide integrated audio-visual solutions where a line-up of standards fits together nicely to provide a system specification. At every meeting MPEG works simultaneously on tens of intertwined activities.

MPEG needs to work like a company developing products, but it is not a company. Fortunately, one can say, but also unfortunately, because it has to operate under strict rules. ISO is a private organisation, but it is international in scope.

Posts in this thread (in bold this post)

- The MPEG ecosystem

- Why is MPEG successful?

- MPEG can also be green

- The life of an MPEG standard

- Genome is digital, and can be compressed

- Compression standards and quality go hand in hand

- Digging deeper in the MPEG work

- MPEG communicates

- **How does MPEG actually work?**

- Life inside MPEG

- Data Compression Technologies – A FAQ

- It worked twice and will work again

- Compression standards for the data industries

- 30 years of MPEG, and counting?

- The MPEG machine is ready to start (again)

- IP counting or revenue counting?

- Business model based ISO/IEC standards

- Can MPEG overcome its Video “crisis”?

- A crisis, the causes and a solution

- Compression – the technology for the digital age

- On my Charles F. Jenkins Lifetime Achievement Award

- Standards for the present and the future