What is data

Data can be defined as the digital representation of an entity. An entity can have attributes of different kinds: physical, virtual, logical or other.

A river may be represented by its length, its average width, its maximum, minimum and average flow, the coordinates of its bed from the source to the mouth, and so on. Typically, which data of an entity are captured depends on the intended use of the data. If river data are to be used for agricultural purposes, the depth of the river, its flow in the different seasons, its width, the nature of the soil, etc. are likely to be important.

Video and audio intended for human consumption are data types characterised by large amounts of data, typically samples of the visual and audio information: tens, hundreds, thousands or even millions of Mbit/s for video, and tens, hundreds or thousands of kbit/s for audio.

Except for niche cases, such amounts of data are unsuited to storage and transmission.
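The orders of magnitude quoted above can be checked with simple arithmetic: an uncompressed rate is the number of samples per second times the bits per sample. The sketch below assumes a few formats chosen here purely for illustration (an SD and an HD video format with 8-bit chroma-subsampled samples, 8 kHz telephone speech and 44.1 kHz CD audio); blanking intervals and container overheads are ignored.

```python
def raw_video_bps(width, height, fps, bits_per_sample, samples_per_pixel):
    """Uncompressed video bit rate in bit/s.

    samples_per_pixel is 3.0 for 4:4:4, 2.0 for 4:2:2 and 1.5 for 4:2:0
    chroma sampling (one luma sample plus the share of chroma per pixel).
    """
    return width * height * samples_per_pixel * bits_per_sample * fps


def raw_audio_bps(sample_rate, bits_per_sample, channels):
    """Uncompressed PCM audio bit rate in bit/s."""
    return sample_rate * bits_per_sample * channels


# Illustrative formats (assumptions, not taken from the text).
video = {
    "SD 720x576, 25 fps, 8-bit 4:2:2": raw_video_bps(720, 576, 25, 8, 2.0),
    "HD 1920x1080, 60 fps, 8-bit 4:2:0": raw_video_bps(1920, 1080, 60, 8, 1.5),
}
audio = {
    "telephone speech, 8 kHz, 8-bit mono": raw_audio_bps(8_000, 8, 1),
    "CD audio, 44.1 kHz, 16-bit stereo": raw_audio_bps(44_100, 16, 2),
}

for name, bps in video.items():
    print(f"{name}: {bps / 1e6:,.1f} Mbit/s")
for name, bps in audio.items():
    print(f"{name}: {bps / 1e3:,.1f} kbit/s")
```

The two video formats land at roughly 166 and 1,493 Mbit/s and the two audio formats at 64 and 1,411.2 kbit/s, consistent with the ranges in the text; higher resolutions, frame rates and bit depths push video further up.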

High-speed sequencing machines produce “reads”, i.e. snapshots of randomly taken segments of a DNA sample whose coordinates in the genome are unknown. As the “reading” process is noisy, each nucleotide is assigned a “quality value”, typically expressed as an integer. As a nucleotide must be read several tens of times to achieve a sufficient degree of confidence, whole genome sequencing files may reach Terabytes in size. This digital representation of a DNA sample, made of unordered and unaligned reads, is not only costly to store and transmit but also ill-suited to extracting the vital information a medical doctor needs to make a diagnosis.
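To make “quality value” and “several tens of reads” concrete, the sketch below assumes the widely used Phred convention (Q = -10·log10 of the error probability) and the FASTQ text encoding with an ASCII offset of 33; the text itself does not name a format. It also estimates, under a deliberately crude independence model, how the chance of a wrong majority call falls as a nucleotide is read more times. The quality string is made up.

```python
from math import comb


def phred_scores(quality_string, offset=33):
    """Decode a FASTQ-style quality string into integer quality values."""
    return [ord(ch) - offset for ch in quality_string]


def error_probability(q):
    """Phred convention: Q = -10 * log10(P_error), hence P_error = 10 ** (-Q / 10)."""
    return 10 ** (-q / 10)


def majority_wrong_probability(p_error, n_reads):
    """Probability that at least half of n independent reads of a nucleotide are wrong.

    Pessimistic simplification: all wrong reads are assumed to agree on the
    same wrong base, and ties count as failures.
    """
    k_min = (n_reads + 1) // 2
    return sum(
        comb(n_reads, k) * p_error**k * (1 - p_error) ** (n_reads - k)
        for k in range(k_min, n_reads + 1)
    )


scores = phred_scores("II5+!")  # hypothetical quality string for five nucleotides
print(scores, [error_probability(q) for q in scores])
for n in (1, 5, 31):
    print(n, majority_wrong_probability(0.1, n))
```

Even with a per-read error probability of 0.1, going from 1 to 5 reads lowers the chance of a wrong majority from 0.1 to under 0.01, and 31 reads lower it much further; a real pipeline would also weigh each read by its quality value.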

Data and information

Data become information when their representation makes them suitable for a particular use. Tens of thousands of researcher-years have been invested in studying the problem and finding practical and convenient ways to represent audio and visual data.

For several decades, facsimile compression was the engine that drove office efficiency, delivering high-quality prints in 1/6 of the time taken by early analogue facsimile machines.

Reducing the audio data rate by a factor of 10-20 while preserving the original quality, as offered by MP3 and AAC, changed the world of music forever. Reducing the video data rate by a factor of 1,000, as achieved by the latest MPEG-I VVC standard, multiplies the ways humans can have visual experiences. Surveillance applications developed alternative ways to represent audio and video that enable, for instance, event detection and object counting.
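The reduction factors quoted above translate into concrete bit rates. In the sketch below, CD audio stands in for “the original quality” and a 2160p, 60 fps, 10-bit 4:2:0 format stands in for the video source; both choices are assumptions made for illustration, and the factors are simply the ones given in the text, not measurements of any codec.

```python
def compressed_bps(raw_bps, reduction_factor):
    """Bit rate left after dividing a raw rate by a compression factor."""
    return raw_bps / reduction_factor


# Assumed reference sources (illustrative, not taken from the text).
CD_AUDIO_BPS = 44_100 * 16 * 2  # 16-bit stereo PCM at 44.1 kHz = 1,411,200 bit/s
UHD_VIDEO_BPS = 3840 * 2160 * 1.5 * 10 * 60  # 10-bit 4:2:0 at 60 fps = 7,464,960,000 bit/s

for factor in (10, 20):  # audio reduction range quoted for MP3 and AAC
    print(f"audio / {factor}: {compressed_bps(CD_AUDIO_BPS, factor) / 1e3:.1f} kbit/s")

# Video reduction factor quoted for VVC
print(f"video / 1000: {compressed_bps(UHD_VIDEO_BPS, 1000) / 1e6:.2f} Mbit/s")
```

The audio results, about 71 to 141 kbit/s, fall in the range of bit rates commonly used for compressed music, and a factor of 1,000 brings the assumed UHD source down to roughly 7.5 Mbit/s.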

The MPEG-G standard, developed to convert DNA reads into information that needs fewer bytes to be represented, also gives easier access to the information that is of immediate interest to a medical doctor.

In these examples, the transformation of data from “dull” into “smart” collections of numbers has largely been achieved by exploiting the statistical properties of the data or of their transformations.

Although quite dated, the method used to compress facsimile information is emblematic. The Group 3 facsimile algorithm defines two statistical variables: the lengths of “white” and “black” runs, i.e. the number of consecutive white (black) points, starting with the first white (black) point after a black (white) point and ending when a black (white) point is encountered. Run lengths are then coded with variable-length codes, shorter for the run lengths that occur more frequently in typical documents. A machine that had been trained by reading billions of pages could develop an understanding of how business documents are typically structured and would probably be able to use fewer bits (and bits probably more meaningful to an application) to represent a page of a business document.
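The run-length idea can be sketched in a few lines. The code below splits each scan line into white/black runs and builds a Huffman code from the run-length statistics of a made-up page, so that frequent runs get short codewords. This is a simplification under stated assumptions: Group 3 (ITU-T T.4) uses fixed Modified Huffman tables derived from typical documents, plus make-up and end-of-line codes, none of which are reproduced here, and the cost of sending the code table is ignored.

```python
import heapq
from collections import Counter
from itertools import count, groupby

WHITE, BLACK = 0, 1


def run_lengths(row):
    """Split a scan line into alternating (colour, length) runs.

    As in Group 3 one-dimensional coding, each line is taken to start with a
    white run; if the first point is black, that white run has length 0.
    """
    runs = []
    for colour, group in groupby(row):
        if not runs and colour == BLACK:
            runs.append((WHITE, 0))
        runs.append((colour, len(list(group))))
    return runs


def huffman_code(freqs):
    """Build a prefix code {symbol: bit string} from symbol frequencies."""
    tiebreak = count()  # keeps heap entries comparable without comparing dicts
    heap = [(f, next(tiebreak), {sym: ""}) for sym, f in freqs.items()]
    heapq.heapify(heap)
    if len(heap) == 1:  # a single symbol still needs a one-bit codeword
        return {sym: "0" for sym in heap[0][2]}
    while len(heap) > 1:
        f1, _, low = heapq.heappop(heap)
        f2, _, high = heapq.heappop(heap)
        merged = {sym: "0" + bits for sym, bits in low.items()}
        merged.update({sym: "1" + bits for sym, bits in high.items()})
        heapq.heappush(heap, (f1 + f2, next(tiebreak), merged))
    return heap[0][2]


# Hypothetical 20x5 page: mostly white, with a small black mark on two lines.
page = [
    [WHITE] * 20,
    [WHITE] * 20,
    [WHITE] * 5 + [BLACK] * 3 + [WHITE] * 12,
    [WHITE] * 5 + [BLACK] * 3 + [WHITE] * 12,
    [WHITE] * 20,
]

symbols = [run for row in page for run in run_lengths(row)]
code = huffman_code(Counter(symbols))
encoded_bits = sum(len(code[s]) for s in symbols)
raw_bits = sum(len(row) for row in page)
print(f"{raw_bits} raw bits -> {encoded_bits} coded bits")
```

On this five-line page, 100 raw points become 18 coded bits; the gain comes entirely from how few distinct runs occur and how often they repeat.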

CABAC (Context-Adaptive Binary Arithmetic Coding) is another, more sophisticated example of data compression using statistical methods. “Context-Adaptive” means that the probability models used for coding are adapted to the local statistics; “Binary” means that all variables are converted to, and handled in, binary form; “Arithmetic Coding” is the entropy coding technique applied to the resulting binary symbols. CABAC can therefore be applied to variables whose statistical characteristics are not uniform. A machine that had been trained by observing how a variable changes depending on other parameters should probably be able to use fewer and more meaningful bits to represent the data.
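The context-adaptive idea can be illustrated without a full arithmetic coder. The sketch below keeps one adaptive probability estimate per context and adds up the ideal code length -log2 p of each bit, which an arithmetic coder approaches closely. It is a simplification, not CABAC itself: the count-based estimator, the choice of “previous bit” as the context and the test sequence are all assumptions, whereas the real scheme uses the binarisation, probability estimators and binary arithmetic coder defined in the video coding standards.

```python
import math


class AdaptiveBitModel:
    """Adaptive estimate of P(bit) for one context, from the counts seen so far."""

    def __init__(self):
        self.counts = [1, 1]  # start at 1 so no bit ever has probability 0

    def cost_and_update(self, bit):
        """Return the ideal code length of `bit` in bits, then learn from it."""
        p = self.counts[bit] / sum(self.counts)
        self.counts[bit] += 1
        return -math.log2(p)


def coding_cost(bits, context_of):
    """Total ideal cost of `bits` with one adaptive model per context.

    context_of(bits, i) must depend only on bits[:i], i.e. on data the
    decoder has already reconstructed, so that it can form the same contexts.
    """
    models = {}
    total = 0.0
    for i, bit in enumerate(bits):
        ctx = context_of(bits, i)
        if ctx not in models:
            models[ctx] = AdaptiveBitModel()
        total += models[ctx].cost_and_update(bit)
    return total


# A made-up binary variable whose local statistics change: long runs of 0s and 1s.
bits = ([0] * 30 + [1] * 30) * 4

no_context = coding_cost(bits, lambda b, i: None)
previous_bit = coding_cost(bits, lambda b, i: b[i - 1] if i > 0 else 0)
print(f"{len(bits)} bits: {no_context:.1f} without context, {previous_bit:.1f} with context")
```

The sequence has equal numbers of 0s and 1s, so a single model pays roughly one bit per bit, while conditioning on the previous bit captures the local behaviour and costs far less.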

The end of data coding as we know it?

Many data coding approaches were developed with the aim of exploiting statistical properties of some data transformations. The two examples given above suggest that Machine Learning could provide more efficient solutions to data coding than traditional methods.
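One simple way to picture a “trained machine” taking over the probability estimation is an online logistic-regression model that predicts each bit from the preceding ones and updates its weights after every bit, so that a decoder could repeat the same learning. The sketch below, a toy under stated assumptions, compares such a model with a bias-only model on a made-up periodic sequence; logistic regression, the learning rate and the history length are illustrative choices, not a method described in the text.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def learned_coding_cost(bits, history, learning_rate=0.5):
    """Ideal cost in bits of `bits` when P(bit = 1) is predicted by an online
    logistic-regression model over the previous `history` bits.

    After each bit the weights take one gradient step on the log loss; the
    step uses only already-coded bits, so a decoder could mirror it.
    """
    weights = [0.0] * (history + 1)  # weights[0] is the bias
    total = 0.0
    for i, bit in enumerate(bits):
        # Features: constant 1, then bits[i-1] ... bits[i-history], 0 before the start.
        features = [1.0] + [
            float(bits[i - k]) if i - k >= 0 else 0.0 for k in range(1, history + 1)
        ]
        p_one = sigmoid(sum(w * x for w, x in zip(weights, features)))
        p_bit = p_one if bit == 1 else 1.0 - p_one
        total -= math.log2(max(p_bit, 1e-12))  # guard against log2(0)
        # The log-loss gradient is (p_one - bit) * x, so step against it.
        weights = [w + learning_rate * (bit - p_one) * x for w, x in zip(weights, features)]
    return total


bits = [0, 0, 1] * 100  # made-up sequence with a period of three
bias_only = learned_coding_cost(bits, history=0)
two_bit_context = learned_coding_cost(bits, history=2)
print(f"{len(bits)} bits: {bias_only:.1f} bias only, {two_bit_context:.1f} with two-bit history")
```

A two-bit history lets the model learn that a 1 follows two 0s, something a bias alone cannot express; richer features or models would play the same role for real coding variables.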

There is, however, no reason to stay confined to the variables exploited in the “statistical” phase of data coding: machines’ learning capabilities could probably be put to better use on data processed by different methods.

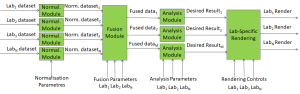

The MPAI workplan has not been set yet, but one proposal is to investigate, on the one hand, how far artificial intelligence can be applied to the “old” variables and, on the other, how far artificial intelligence applied to fresh approaches can transform data into more usable information. MPAI standards will follow, based on the results.

Smart application of Artificial Intelligence promises to do a better job of converting data into information than statistical approaches have done so far.
