Video in MPEG has a long history

MPEG started with the idea of compressing the 216 Mbit/s of standard definition video, and the associated 1.41 Mbit/s of stereo audio, for interactive video applications on Compact Disc (CD). That innovative medium of the early 1980s could provide a sustained bitrate of 1.41 Mbit/s for about 1 hour, and that bitrate was expected to accommodate both the video and the audio information. At about the same time, some telco research laboratories were working on an oddly named technology called Asymmetric Digital Subscriber Line (ADSL): a modem for high-speed (at that time) transmission of ~1.5 Mbit/s over the “last mile”, but only from the telephone exchange to the subscriber’s network termination. In the other direction, only a few tens of kbit/s were supported.
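The bitrates quoted above can be checked with a little arithmetic. The sketch below is only an illustration and rests on sampling parameters that the text does not spell out: standard definition video is assumed to be 4:2:2 with luma sampled at 13.5 MHz, each chroma component at 6.75 MHz and 8 bits per sample, and CD audio is assumed to be 44.1 kHz, 16 bits, 2 channels. The function names are made up for this example.

```python
# Assumed sampling parameters (not stated in the article).
LUMA_RATE_HZ = 13_500_000      # luma samples per second
CHROMA_RATE_HZ = 6_750_000     # samples per second for each chroma component
VIDEO_BITS_PER_SAMPLE = 8

AUDIO_RATE_HZ = 44_100         # CD sampling frequency
AUDIO_BITS_PER_SAMPLE = 16
AUDIO_CHANNELS = 2


def video_bitrate():
    """Uncompressed video bitrate in bit/s (one luma + two chroma components)."""
    samples_per_second = LUMA_RATE_HZ + 2 * CHROMA_RATE_HZ
    return samples_per_second * VIDEO_BITS_PER_SAMPLE


def audio_bitrate():
    """Uncompressed stereo audio bitrate in bit/s; also the CD payload rate."""
    return AUDIO_RATE_HZ * AUDIO_BITS_PER_SAMPLE * AUDIO_CHANNELS


def required_reduction(source_bps, channel_bps):
    """Factor by which the source must shrink to fit the channel."""
    return source_bps / channel_bps


video_bps = video_bitrate()
audio_bps = audio_bitrate()
cd_bps = audio_bps  # the CD delivers exactly the bitrate of its own PCM audio

print(f"video: {video_bps / 1e6:.0f} Mbit/s")
print(f"audio: {audio_bps / 1e6:.2f} Mbit/s")
print(f"reduction needed: ~{required_reduction(video_bps + audio_bps, cd_bps):.0f}x")
```

Under these assumptions, fitting both video and audio on the disc calls for an overall reduction of roughly 150 times, which gives a feeling for how ambitious the original goal was.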

Therefore, if we exclude a handful of forward-looking broadcasters, the MPEG-1 project was really a Consumer Electronics – Telco project.

Setting aside the eventual success of the MPEG-1 standard – Video CD (VCD) used MPEG-1 and 1 billion players were produced in total, albeit for a goal different from the original interactive video – MPEG-1 was a remarkable success for being the first to identify an enticing business case for video (and audio) compression, and systems, on top of which tens of successful MPEG standards were built over the years.

This article has many links

In Forty years of video coding and counting I have recounted the full story of video coding and in The MPEG drive to immersive visual experiences I have focused on the efforts MPEG has made, since its early years, to provide standards for an extended 3D visual experience. In Quality, more quality and more more quality I have described how MPEG uses and innovates subjective quality assessment methodologies to develop and eventually certify the level of performance of a visual coding standard. In On the convergence of Video and 3D Graphics I have described the efforts MPEG is making to develop a unified framework that encompasses a set of video sources producing pixels and a set of sensors producing points. In More video with more features I described how MPEG has been able to support more video features in addition to basic compression.

Now to the question

Seeing all this, the obvious question a reader might ask is: if MPEG has done so much in the area of visual information coding standards, does MPEG still have much to do in that space? A reader with even a superficial understanding of the force that drives MPEG should probably know the answer, but I am not going to give it right now. I will first lay out what I see as the future of MPEG in this area.

I need to make a disclaimer first. The title of this article is “The future of visual information coding standards”, but I should restrict the scope to “dynamic (i.e. time dependent) visual information coding”. Indeed, the coding of still pictures is a different field of endeavour serving the needs of a different industry with a different business model. It should not be a surprise that the two JPEG standards – the original JPEG and JPEG 2000 – both have a baseline mode (the only one that is actually used) which is Option 1 (ISO/IEC/ITU language for “royalty free”). It should also be no surprise that, while it is conceivable to think of a standard for holographic still image coding, holography is not even mentioned in this article.

There was always a need for new video codecs

Forty years of video coding and counting explains the incredible decades-long ride to develop video coding standards, all based on the same basic ideas enriched at each generation. With the availability of the latest VVC video compression standard, hopefully in the second half of 2020, they will enable the industry to reduce the bitrate by a factor of 1,000 from video PCM samples to compressed bitstream (the uncertainty is caused by the current Covid-19 pandemic, which is taking its toll on MPEG as well).

The need for new and better compression standards, whenever technology makes them possible and the improvement over the latest existing standard warrants them, has been driven by the push toward video with higher resolution, colour, bits per pixel, dynamic range, viewing angle etc., and by the lagging availability of a correspondingly higher bitrate to the end user.

The push toward “higher everything” will continue, but will the bitrate made available to the end user continue to lag?

The safe answer is: it will depend. It is a matter of fact that bandwidth is not an asset uniformly available in the world. In the so-called advanced economies the introduction of fibre to the home or to the curb continues apace. The global 5G services market size is estimated to reach 45.7 B$ by 2020 and to register a CAGR of 32.1% from 2021 to 2025, reaching ~184 B$. Note that 2025 is the time when MPEG should think seriously about a new video coding standard. The impact of the current pandemic could further accelerate 5G deployment.
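The 2025 figure follows from compounding the 2020 estimate at the quoted growth rate. The sketch below assumes that “from 2021 to 2025” means five yearly compounding steps applied to the 2020 value; the function name is made up for this example.

```python
def compound_growth(start_value, annual_rate, years):
    """Value after compounding start_value at annual_rate for the given years."""
    return start_value * (1 + annual_rate) ** years


market_2020_busd = 45.7   # estimated 5G services market in 2020, in B$
cagr = 0.321              # compound annual growth rate of 32.1%
years = 5                 # assumption: 2021, 2022, 2023, 2024 and 2025

market_2025_busd = compound_growth(market_2020_busd, cagr, years)
print(f"Estimated 2025 market: ~{market_2025_busd:.0f} B$")
```

With these inputs the result lands at about 184 B$, consistent with the figure quoted above, i.e. roughly a fourfold increase in five years.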

More video and which codecs

The first question is whether there will be room for a new video coding standard. My answer is yes, for at least two reasons. The first is socio-economic: the share of the world population served by a limited amount of bandwidth will remain large, while the desire to enjoy the same level of experience as the rest of the world will remain high. The second is technical: currently, efficient 3D video compression is largely dependent on efficient 2D video compression.

The second question is trickier. Will this new (after VVC) 2D video compression standard still be another extension of the motion compensated prediction scheme? I am sure that the answer could be yes. The prowess of the MPEG community is such that another 50% improvement could well be provided. I am not sure that will happen, though. Machine learning applied to video coding is showing that significant improvements over state-of-the-art video compression can be obtained by replacing components of existing schemes with Neural Networks (NN), or even by defining entirely new NN-based architectures.

The latter approach has several aspects that make it desirable. The first is that an NN is trained for a certain purpose, but you can always train it better, possibly at the cost of making it heavier. Neural Network Compression (NNC), another standard MPEG is working on, could further extend the scope for incrementally improving the performance of a video coding standard, without changing the standard, by making components of the standard downloadable. Another desirable aspect is that media devices will become more and more reliant on Artificial Intelligence (AI)-inspired technologies. Therefore an NN-based video codec could simply be more attractive for a device implementor, because the basic processing architectures are shared amongst a larger number of data types.

New types of video codec

There is another direction that needs to be considered in this context: the large and growing quantity of data that is being, and will be, produced by connected vehicles, video surveillance, smart cities etc. In most cases today, and more so in the future, it is out of the question to have humans at the other side of the transmission channel watching what is being transmitted. More likely there will be machines that monitor what happens. Therefore, the traditional video coding scenario, which aims to achieve the best video/image under certain bitrate constraints with humans as consumption targets, is inefficient and unrealistic in terms of latency and scale when the consumption target is a machine.

Video Coding for Machines (VCM) is the title of an MPEG investigation that seeks to determine the requirements for this novel, but not entirely new, video coding standard. Indeed, the technologies standardised by MPEG-7 – efficiently compressed image and video descriptors and description schemes – belong to the same category as VCM. It must be said, however, that 20 years have not passed in vain. It is expected that all descriptors will be the output of one or more NNs.

One important requirement is that, while millions of streams may be monitored by machines, some streams may need to be monitored by humans as well, possibly after they have been alerted by a machine. Therefore VCM is linked to the potential new video coding standard I have talked about above. The question is whether VCM should be called HMVC (Human-Machine Video Coding) or whether there should be VCM (where the human part remains below threshold in terms of priority) and YAVC (Yet Another Video Coding, where the user is meant to be a human).

Immersive video codecs

The MPEG drive to immersive visual experiences shows that MPEG has always been fascinated by immersive video. The fascination is not fading away, as shown by the fact that MPEG plans to release the Video-based Point Cloud Compression standard in four months and the MPEG Immersive Video standard in a year.

These standards, however, are not the end points, but the starting points in the drive to more rewarding user experiences. Today we cannot say how and when MPEG standards will be able to provide full navigation in a virtual space. However, that remains the goal for MPEG. Reaching that goal will also depend on the appearance of new capture and display technologies.

Conclusions

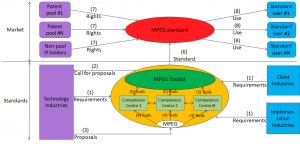

The MPEG war machine will be able to deliver the standards that will keep the industry busy developing the products and services that will enhance the user experience. But we should not forget an important element: the need to adapt MPEG’s business model to the new age.

MPEG needs to adapt, not change, its business model. If MPEG has been able to sustain the growth of the media industry, it is because it has provided opportunities to remunerate the good Intellectual Property that is part of its standards.

Other business models are appearing. The MPEG business model has shown its worth for the last 30 years. It can do the same for another 30 years if MPEG is able to develop a strategy to face and overcome the competition to its standards.
